I get a lot of questions about Machine Learning, and they fall into two areas: How does it work, and how will it affect selling insurance? To answer them, I am writing a two-part article for Broker World explaining the technology and how it’s going to change life insurance processing.

Machine Learning is not new, nor is it the technology that will replace humans. It’s just another tool to be integrated within the business process. Machine Learning (ML) really competes with two of the core processes we use today in the financial services industry: rules-based workflow and document imaging. Rules-based workflow remains people intensive, and its processes have a short life span. Document imaging (including all digital pictures) remains less than accurate in its digital transcriptions, resulting in dirty data or false-positive classifications. ML, though, is real and a game changer if you use it right. In part two I’ll expand on how ML will replace rules-based workflow.

Let’s start with the Artificial Intelligence (AI) family tree so we can understand what makes it tick and, for our processes, make sure we’re using its strengths. There are two disciplines of AI: Symbolic Learning and Machine Learning. Symbolic Learning (SL) works with visual input (e.g., pictures and streaming video) and Machine Learning works with data. SL is the science behind self-driving cars and robots. Humans see with their eyes and hear with their ears, and their brains process what’s going on around them to predict how that environment will change, all the while storing those events and their outcomes.

At the center of ML, we need to discuss how we learn and how we train machines. There are three types of training: Supervised, Unsupervised and Reinforcement. Think of a newborn child learning how to eat. Is it instinct or learned? Since it takes a baby about six months to eat solid food on his own, I believe it is learned. If not, the newborn would know how to feed himself on day one. The first six months are Reinforcement training, where the child learns how to put his fist in his mouth. During the same time, Unsupervised training is happening while the baby watches others around him eat. Then, around six months, you place food in front of him and the baby begins to pick it up. Soon thereafter you introduce the spoon for Supervised training. SL uses all three types of learning in a similar manner for training cars and robots.

Most robotic training is Reinforcement training, which is like Thomas Edison’s famous quote, “I didn’t fail, I learned 10,000 ways that won’t work.” Picture a robot challenged with putting a ball, attached by a two-foot string, in the cup held by the robot’s hand. The robot repeats the event and alters the energy slightly each time until the ball lands in the cup. It takes about 99 tries until it’s successful, and then it never misses. Now if that robot sends the answer (over IoT) to another robot with the same ball and cup, the second robot would be successful on the first try: that is Supervised training. This is how robots learn to walk and move around their environment.
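The ball-and-cup exercise can be sketched in a few lines of Python. This is only a toy trial-and-error search, not a full reinforcement learning algorithm, and every name and number in it is an assumption made for illustration: `lands_in_cup` stands in for the physical attempt, energy is measured in made-up whole units, and the "right" energy of 70 is arbitrary.

```python
# Toy sketch of the ball-and-cup exercise. All names and numbers are
# hypothetical; a real robot would measure success with sensors.

def lands_in_cup(energy, target=70, tolerance=1):
    """Stand-in for one physical attempt: success if energy is close to target."""
    return abs(energy - target) <= tolerance


def learn_by_trial(start=10, step=1, max_tries=1000):
    """Repeat the attempt, nudging the energy each time, until the ball lands."""
    energy = start
    for attempt in range(1, max_tries + 1):
        if lands_in_cup(energy):
            return energy, attempt  # remember what worked and how long it took
        energy += step              # alter the energy slightly and try again
    raise RuntimeError("no successful attempt within max_tries")


# First robot learns the hard way.
learned_energy, tries = learn_by_trial()
print(f"Robot A succeeded after {tries} tries with energy {learned_energy}")

# Second robot is simply handed the answer and succeeds on its first try.
print("Robot B first try:", lands_in_cup(learned_energy))
```

The first robot pays for the answer in attempts; the second one pays nothing because the answer is handed to it.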

ML focuses on data to make a prediction of an outcome. ML divides into two groups called Statistical Learning and Deep Learning. Statistical Learning focuses on speech recognition and natural language processing (e.g., Dragon, Google Voice, Amazon Echo, etc.). Deep Learning (DL) is the field of analyzing complicated data from many inputs and across many dimensions, like a color photograph or a scanned image, and breaking the source into segments to analyze it from many perspectives, drawing on mathematics such as the change-of-basis matrix. We call this DL-CNN (Deep Learning-Convolutional Neural Network); I refer to DL-CNN as the “Study of Pixels.”
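To give a feel for what "breaking the source into segments" means, here is a minimal Python sketch of the sliding-window operation at the heart of a convolutional layer. It is an illustration under my own assumptions, not how any particular product implements it: the 5x5 image, the 3x3 `VERTICAL_EDGE` filter and the function name are all hypothetical, and a real CNN learns its filter values during training rather than having them typed in.

```python
# Minimal sketch of one convolution filter sliding over a tiny image.
# Image, filter values and names are illustrative assumptions.

def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and return the weighted sums.

    This is the cross-correlation form that CNN layers typically use.
    """
    k = len(kernel)
    out_rows = len(image) - k + 1
    out_cols = len(image[0]) - k + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(k) for j in range(k))
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# A 5x5 greyscale patch: dark (0) on the left, light (1) on the right.
IMAGE = [[0, 0, 0, 1, 1] for _ in range(5)]

# A filter that responds where brightness increases from left to right.
VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

for row in convolve2d(IMAGE, VERTICAL_EDGE):
    print(row)  # each row prints [0, 3, 3]: flat area scores 0, the edge scores 3
```

Each filter is one "perspective" on the same pixels, and a network stacks many of them.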

Deep Learning is the analysis of an image’s pixels and the cataloging of the pixels found in the image. An orange can be told apart from an apple by color, texture and shape: orange versus red, bumpy versus smooth, and round versus trapezoid-like. The ability to identify objects with CNN processing has tremendous benefit across many industries like farming, manufacturing, transportation and financial services. The ability to detect the brown spot on an apple or orange and remove the item robotically from shipping would improve the product’s quality and the safety of the public. In manufacturing, quality control processes are being deployed that use cameras to review the work area and ensure the correct number of parts and the correct part numbers were used across an entire assembly of hundreds of parts, improving quality to levels never seen before. Imagine long-haul trucks being scanned for damage that could lead to traffic accidents, which could then be prevented.

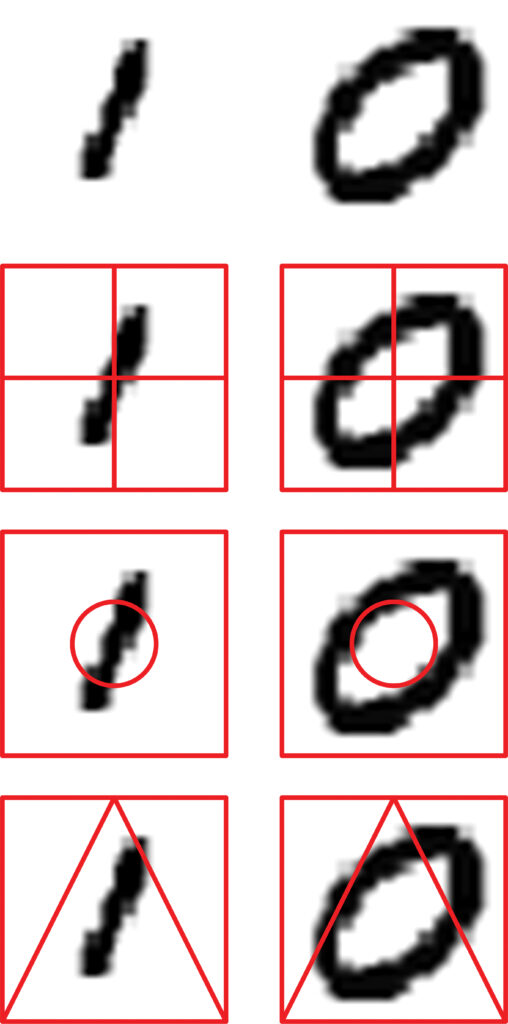

When applying DL-CNN to images of handwriting or machine print with the goal of creating its data equivalent, many algorithms (i.e., perspectives) must be utilized. When analyzing an imaged word or character you basically count pixels from different perspectives and compare the counts using the k-nearest neighbors (k-NN) algorithm. We define a known box around the character we want to recognize, record its pixel size (e.g., 28 pixels by 28 pixels) and call it the “Sample.” Inside the Sample we draw four equal-size boxes and count the number of pixels in each quadrant. Then we draw a circle of a known diameter inside the Sample and count the pixels inside and outside of the circle. Then we draw more shapes (perspectives) and record their pixel counts. Each of these pixel counts is then compared to stored Sample pixel counts, and the closest counts start to build a neighborhood or grouping, which begins to narrow down the possible answers for the Sample.
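The counting step can be sketched in Python as follows. Treat it as a rough illustration under stated assumptions rather than a production recognizer: the function names are mine, the Sample is a hand-drawn 8x8 grid instead of 28x28 to keep it readable, and a pixel counts as "inked" when it is marked with `#`.

```python
# Illustrative sketch of the quadrant and circle perspectives.
# Function names, the 8x8 Sample and the radius are assumptions.

def quadrant_counts(sample):
    """Count inked pixels in the four equal quadrants of a square Sample."""
    half = len(sample) // 2
    counts = [0, 0, 0, 0]  # top-left, top-right, bottom-left, bottom-right
    for row, line in enumerate(sample):
        for col, inked in enumerate(line):
            if inked:
                counts[(2 if row >= half else 0) + (1 if col >= half else 0)] += 1
    return counts


def circle_counts(sample, radius):
    """Count inked pixels inside and outside a circle centered in the Sample."""
    center = (len(sample) - 1) / 2
    inside = outside = 0
    for row, line in enumerate(sample):
        for col, inked in enumerate(line):
            if inked:
                if (row - center) ** 2 + (col - center) ** 2 <= radius ** 2:
                    inside += 1
                else:
                    outside += 1
    return inside, outside


def parse(rows):
    """Turn rows of '#' and '.' into a grid of True/False pixels."""
    return [[ch == "#" for ch in line] for line in rows]


# A hand-drawn zero used as the Sample.
SAMPLE = parse([
    "..####..",
    ".#....#.",
    "#......#",
    "#......#",
    "#......#",
    "#......#",
    ".#....#.",
    "..####..",
])

print(quadrant_counts(SAMPLE))     # [5, 5, 5, 5]
print(circle_counts(SAMPLE, 2.5))  # (0, 20)
```

Every additional shape you draw adds another number to the list, and that list of counts is what gets compared against the stored Samples.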

Example: Let’s compare the pixel counts for the numbers 1 (One) and 0 (Zero). If I use the four-quadrant method to count, I would find there is very little difference between the 1 and the 0 because the counts are well distributed across the four quadrants; therefore both are still in the running as answers. Now apply the circle method: for the number 1, both the inside and the outside have pixels; for the number 0, we find most, if not all, of the pixels on only one side of the circle, either inside or outside. Now if handed an unknown Sample which returned even pixel counts for the four-quadrant test but only had pixels outside the circle for the circle method, the unknown Sample is probably the number Zero. Now imagine many methods being processed (i.e., the layers of the CNN) and compared to known results (i.e., the Training Set) to produce a “best guess” of what the number is.
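Here is a small Python sketch of that One-versus-Zero comparison, using a nearest-neighbor match on the quadrant and circle counts. It is a toy under my own assumptions: the 8x8 glyphs are hand-drawn, the Training Set holds a single example per digit, and the names `features`, `distance` and `classify` are hypothetical. A real system would use many more perspectives and thousands of stored Samples.

```python
# Toy nearest-neighbor comparison of a 1 and a 0 using pixel counts.
# Glyphs, names and the circle radius are illustrative assumptions.

def features(rows, radius=2.5):
    """Return [four quadrant counts, inside-circle count, outside-circle count]."""
    size = len(rows)
    half, center = size // 2, (size - 1) / 2
    counts = [0] * 6
    for r, line in enumerate(rows):
        for c, ch in enumerate(line):
            if ch != "#":
                continue
            counts[2 * (r >= half) + (c >= half)] += 1  # which quadrant
            inside = (r - center) ** 2 + (c - center) ** 2 <= radius ** 2
            counts[4 if inside else 5] += 1             # which side of the circle
    return counts


def distance(a, b):
    """Squared difference between two lists of counts; smaller means closer."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(unknown, training_set):
    """Pick the label whose stored Sample has the closest counts."""
    target = features(unknown)
    return min(training_set,
               key=lambda label: distance(target, features(training_set[label])))


ZERO = ["..####..", ".#....#.", "#......#", "#......#",
        "#......#", "#......#", ".#....#.", "..####.."]
ONE = ["...##...", "..###...", "...##...", "...##...",
       "...##...", "...##...", "...##...", "..####.."]
TRAINING_SET = {"0": ZERO, "1": ONE}

# An unknown Sample: a slightly narrower zero written by someone else.
UNKNOWN = ["..###...", ".#...#..", "#.....#.", "#.....#.",
           "#.....#.", "#.....#.", ".#...#..", "..###..."]

print(features(ZERO))     # [5, 5, 5, 5, 0, 20]
print(features(ONE))      # [5, 4, 5, 5, 8, 11]
print(features(UNKNOWN))  # [5, 4, 5, 4, 0, 18]
print(classify(UNKNOWN, TRAINING_SET))  # 0
```

Notice that the four quadrant counts barely separate the two digits; it is the circle counts that pull the unknown Sample toward the Zero.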

DL is a Supervised Learning model which requires a Training Set (TS), and the larger the TS, the better the results. The most used public TS is MNIST (Modified National Institute of Standards and Technology database), which provides 60,000 training images and 10,000 test images of handwritten digits in greyscale, roughly 6,000 training samples per digit; a larger extension called EMNIST (Extended MNIST) is also available, with a digits set of 280,000 samples. These greyscale TSs are very good because they can leverage biometric algorithms (perspectives) which can help determine the character written. Think of a winding river with many “S-turns” in it and remember how the inside of the curves collects all the fine sand while the outside of the turn is clean and deeper. When you apply the same behavior to the way people write, their curves behave the same as the stream or river does: fewer pixels on the inside and more pixels on the outside. This further refines the pixel distribution and contributes to the DL-CNN predictive answer. Good sources of Training Sets are no surprise: Amazon, Google, IBM and Microsoft all have TSs. To leverage their TSs, or better said, to avoid building your own, subscribe to their ML services (e.g., Amazon SageMaker, Google TensorFlow, IBM Watson, etc.). One thing to remember: They may add your images and data to their TS; therefore, if your end solution is subject to compliance limitations, clear its usage first.

ML is a real game-changing technology if it is designed properly and tightly focused. DL-CNN, when applied to imaged text or handwriting, can produce results called “False-Positives”: answers that had a high probability of being right but were actually wrong. Imaged text and handwriting are subject to many variables: good vs. bad handwriting, scanning, spelling, form design and people following instructions; we’ve seen ML results vary by 50 percent based on these conditions.
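A short Python sketch shows why these confident-but-wrong answers matter in a workflow. The numbers below are invented purely for illustration, as are the names and the 90 percent acceptance threshold; the point is only that a confidence cutoff alone does not catch them.

```python
# Invented example data: (predicted digit, model confidence, actual digit).
# Nothing here is a measured result.
RESULTS = [
    ("7", 0.98, "7"),
    ("1", 0.95, "7"),  # confident but wrong
    ("0", 0.60, "0"),  # right, but below the cutoff, so sent for review
    ("3", 0.91, "8"),  # confident but wrong
    ("4", 0.97, "4"),
]


def confident_errors(results, threshold=0.90):
    """Return (accepted, wrong): answers accepted at the cutoff and how many were wrong."""
    accepted = [r for r in results if r[1] >= threshold]
    wrong = [r for r in accepted if r[0] != r[2]]
    return len(accepted), len(wrong)


accepted, wrong = confident_errors(RESULTS)
print(f"{wrong} of {accepted} auto-accepted answers were wrong")
```

In this made-up batch, two of the four answers that cleared the cutoff would have flowed straight into the data without anyone looking at them.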

Hopefully I’ve shed some light on the technology in play with ML and how it generally works. In part two of the article, I’ll discuss how the life insurance community can leverage ML to produce better processes and deliver the right insurance to the right individual.